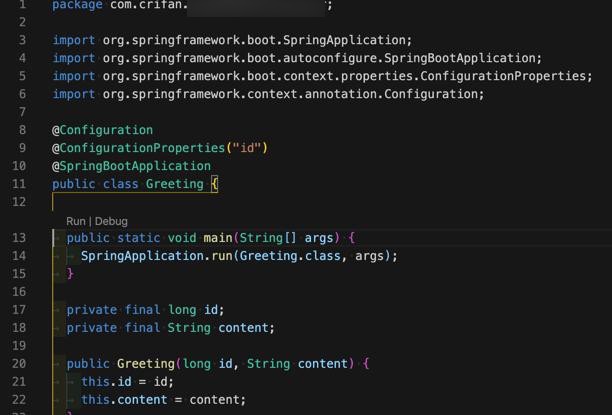

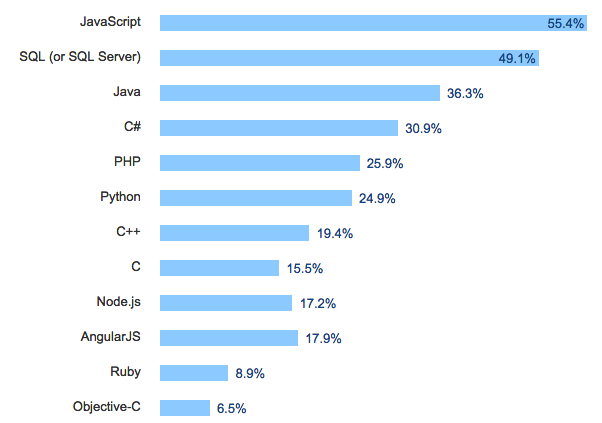

Familiarity with a programming language should be the main consideration, since scraping itself can be supported in almost any language. Web data often comes in complex formats, and the structure of web pages can change frequently, which requires developers to adjust their code. A second consideration, which we mention throughout this article, is the availability of online resources for fixing a bug or looking up alternative coding solutions for your problem. We summarize the pros and cons of three commonly used programming languages for web scraping, and cover low-code or no-code alternatives at the end of the article.

JavaScript, the most popular programming language in 2021 according to GitHub, was originally built for front-end web development. With the help of the Node.js environment, it is now also widely used for developing web applications. Node.js offers libraries such as Puppeteer and Nightmare, which are commonly used for web scraping. According to a 2019 study comparing Python and Node.js libraries for web scraping tasks, Puppeteer performed more efficiently than the other options. We recommend using JavaScript with Node.js for web scraping to more experienced developers, especially if they have some experience with JavaScript.

Pros:

- Large community and availability of support through online forums and tutorials. Compared to Python and Ruby, the other two languages we cover in this article, JavaScript has the highest number of questions, and therefore resources, on Stack Overflow.
- Node.js handles concurrent web page requests very efficiently.
- For input/output (I/O) tasks that require a constant flow of input and output, Node.js performs better than Python and minimizes the user's wait time.

Cons:

- Not easy to understand, especially for less experienced developers.
- Not as robust and efficient as Python for CPU-heavy tasks, such as parsing large amounts of web data after collecting it.
Today's programming languages are very robust in supporting many use cases, including web scraping. The best programming language for a developer to build a web scraper is the language that they are familiar with.

Use the Java HttpURLConnection class to send HTTP requests:

    // obj is a java.net.URL pointing at the page to scrape
    HttpURLConnection con = (HttpURLConnection) obj.openConnection();
    con.setRequestProperty("User-Agent", "Mozilla/5.0");
    int responseCode = con.getResponseCode();
    System.out.println("Response code: " + responseCode);
    BufferedReader in = new BufferedReader(new InputStreamReader(con.getInputStream()));
    StringBuilder response = new StringBuilder();
    String inputLine;
    // Read the response body line by line
    while ((inputLine = in.readLine()) != null) {
        response.append(inputLine);
    }
    in.close();
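The HttpURLConnection fragment leaves out its imports and the creation of `obj`, so here is a minimal self-contained sketch of the same request using only the Java standard library. The class name `PageFetcher`, the method names `readBody` and `fetch`, the 10-second timeouts, the UTF-8 decoding, and the choice to treat any non-200 status as an error are all assumptions made for illustration, not part of the original snippet.

```java
import java.io.BufferedReader;
import java.io.IOException;
import java.io.InputStream;
import java.io.InputStreamReader;
import java.net.HttpURLConnection;
import java.net.URI;
import java.net.URL;
import java.nio.charset.StandardCharsets;

// Hypothetical helper class; the names are illustrative only.
class PageFetcher {

    // Reads a whole stream into a string, line by line.
    // Assumes UTF-8 content; each line is terminated with '\n'.
    static String readBody(InputStream stream) throws IOException {
        StringBuilder response = new StringBuilder();
        try (BufferedReader in = new BufferedReader(
                new InputStreamReader(stream, StandardCharsets.UTF_8))) {
            String inputLine;
            while ((inputLine = in.readLine()) != null) {
                response.append(inputLine).append('\n');
            }
        }
        return response.toString();
    }

    // Sends a GET request and returns the response body.
    // Throws an IOException for any status other than 200 (an assumed policy).
    static String fetch(String address) throws IOException {
        URL obj = URI.create(address).toURL();
        HttpURLConnection con = (HttpURLConnection) obj.openConnection();
        con.setRequestMethod("GET");
        con.setRequestProperty("User-Agent", "Mozilla/5.0");
        con.setConnectTimeout(10_000); // assumed timeout, in milliseconds
        con.setReadTimeout(10_000);    // assumed timeout, in milliseconds
        try {
            int responseCode = con.getResponseCode();
            System.out.println("Response code: " + responseCode);
            if (responseCode != HttpURLConnection.HTTP_OK) {
                throw new IOException("Unexpected response code: " + responseCode);
            }
            return readBody(con.getInputStream());
        } finally {
            con.disconnect();
        }
    }

    public static void main(String[] args) throws IOException {
        // Only touches the network when a URL is passed on the command line.
        if (args.length != 1) {
            System.err.println("usage: java PageFetcher.java <url>");
            return;
        }
        String html = fetch(args[0]);
        System.out.println(html.length() + " characters received");
    }
}
```

Keeping `readBody` separate from `fetch` means the reading loop can be exercised on any `InputStream`, for example a saved HTML file, without making a network call.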

In order to get started, create a new project and import the required Java libraries:

- Jsoup: A great library for parsing HTML and extracting data from websites.
- Apache Commons Lang: Provides a complete set of utilities for working with strings, arrays, and other common data types.

You can use Maven or Gradle to manage the dependencies. With Maven, dependencies are declared in the project's pom.xml file.

Step 2: Inspect the page you want to scrape

Right-click the page that you want to scrape and select "Inspect" (or "Inspect Element"). Note the tag names, classes, and IDs of the elements you need so that you can select them correctly in your code.

You need to send an HTTP request to the server in order to scrape data from the web page.

Use a scalable architecture that can handle large volumes of data and is easy to maintain over time.

- Step 2: Inspect the page you want to scrape.
- Step 3: Send an HTTP request and scrape the HTML.
